Benchmarking Foundation Evaluation Practices 2020

The most comprehensive review of evaluation and learning practices at foundations, this report offers benchmarking data on foundation evaluation practice collected in 2019 from 161 foundations. It makes comparisons to results from 2015, 2012, and 2009.

Philanthropy is grappling with core questions about its very purpose and nature: What does it mean to support equity and to act equitably? What do the exploitative roots at the source of many philanthropic institutions mean for their missions and approach? Does the current philanthropic conception of “strategy” support the kind of thinking about systems and complexity that is needed to achieve foundation goals?

At the same time, foundations are experiencing constant organizational churn. Regularly revisiting and changing their leadership, goals, strategies, and structure is the norm rather than the exception.

With this as context, we need to ask ourselves tough questions about whether current foundation evaluation practices are set up to meet the sector’s current challenges.

- If we really care about equity, what does it require of evaluation and learning functions and investments?

- If we take complexity seriously, are we setting ourselves up to work effectively with complex challenges that require systemic and emergent approaches?

- If we accept that change in the sector is constant, what does it look like to make and support decisions against a backdrop of ongoing uncertainty and flux?

In 2019, the Center for Evaluation Innovation administered a benchmarking survey to collect data on evaluation and learning practices at foundations.

This is an ongoing effort (previous surveys were conducted in 2015, 2012, and 2009) to understand evaluation functions and staff roles; the level of investment in and support of evaluation; the specific evaluative activities foundations engage in; the evaluative challenges foundations experience; and the use of evaluation information once it is collected.

The survey was sent to 354 independent and community foundations in the US and Canada reporting at least $10M in annual giving during the previous fiscal year, and to foundations that participate in the Evaluation Roundtable network (the vast majority of which meet the annual giving criterion). This report includes survey data from 161 foundations, a 45% response rate.

We conduct the survey so that foundations can compare their evaluation and learning structures and practices to those of the broader sector. The results offer a point-in-time assessment of sector practice. They do not necessarily represent “best” or even “good” practice. They do, however, offer valuable inputs on key questions, such as: How should the evaluation and learning function be staffed and resourced? What kinds of evaluative activities should be prioritized? How can evaluation and learning link to strategy?

Some of the benchmarking findings confirm what we already thought about evaluation and philanthropy. Some are surprising and new.

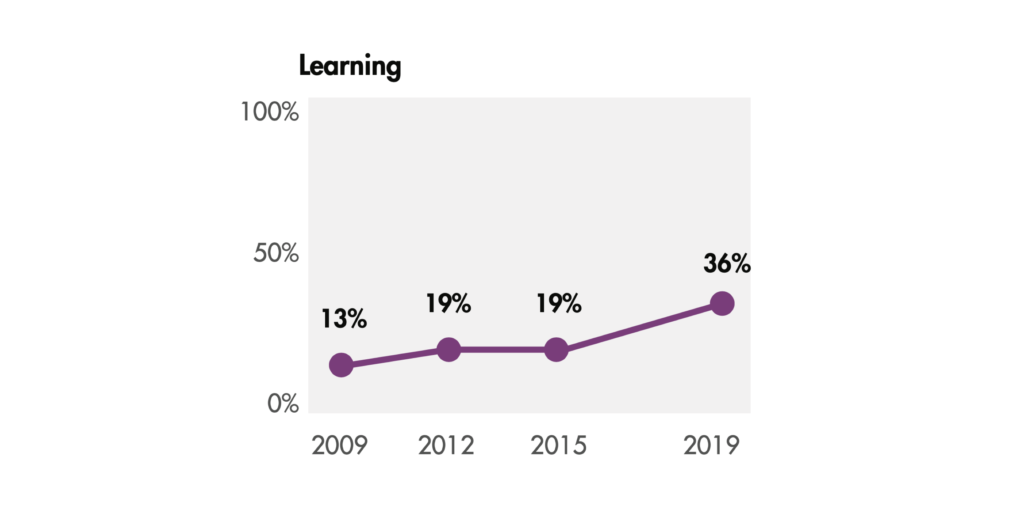

Confirming: Over time, more job titles for foundation evaluation leaders include the word “learning,” but fewer include the word “evaluation.”

The portion of leaders with learning in their titles climbed from 13% in 2009 to 36% in 2019 (page 6). Foundations are prioritizing learning as distinct from evaluation, putting real resources behind it by staffing that function internally.

The way that foundations support learning looks different across the sector. For example, a key differentiating factor we’ve found in the past is whether a foundation leads with learning or leads with evaluation.

In addition, some foundations are cutting the word “evaluation” from foundation evaluation leaders’ job titles and from unit names entirely. For evaluation units that don’t include the word “evaluation,” the word “strategy” is often included. What does this signal about our attitudes towards evaluation? Should we be concerned that foundations are learning and formulating strategy without support from evaluation and data? Or, is this an effort to make the evaluation function more palatable to—and integrated with—program staff?

Foundation staff are the primary intended users of evaluation efforts, ahead of grantees and others in the field. Yet the biggest evaluation challenge foundations face is getting evaluations to yield meaningful insights for the foundation.

Many foundations talk about sharing their learning broadly with peers, partners, and the public. But we know that foundations primarily share their evaluation findings internally, with foundation staff, the CEO, and the board. This has not changed since 2015, and we suspect this has been the case for much longer.

Have any foundations actually cracked the nut of learning in partnership with grantees and others in a way that drives co-created or co-owned change? We hope the answer is yes, but because external audiences are still not prioritized for evaluation findings, we have doubts.

Why does evaluation use continue to be such a major challenge, especially if foundations are prioritizing themselves as users? Do evaluators and program staff understand differently which questions need to be answered? Is there a mismatch between the evaluation approach and the foundation's learning and decision-making needs? The answer is likely both, and more.

Surprising and new: In 2019, there was 1 evaluation staff person for nearly every 16 program staff. This ratio has widened since 2015, when there was 1 evaluation staff person for every 10 program staff.

Foundation evaluation staff are loaded with responsibilities—over 50% of respondents reported they spent time on all 11 of the distinct evaluation responsibilities we asked about, and 11% reported additional responsibilities we hadn’t listed (page 12). Against this backdrop of wide-ranging responsibilities, some foundation evaluation departments are either losing staff or are staying the same size while the rest of the foundation is growing. What does this mean for the depth and quality of evaluation and learning work?

It is more important than ever for foundations to consider which evaluation and learning activities should be prioritized. Are foundation evaluation staff equipped (and supported by leadership) to make these choices? What do different prioritizations mean for evaluation staffing, for how data and evidence are collected and used, and for the way evaluation staff work with other foundation staff?

40% of evaluation staff at foundations are people of color.

Although we did not collect data in this survey about the specific position of staff of color within the evaluation unit, we know through our work with the Evaluation Roundtable that the representation of people of color in evaluation leadership (e.g., the Director or VP roles) is not as high as this percentage suggests. While more recent evaluation director hires are people of color, our experience suggests that foundation evaluation staff who are people of color generally occupy more junior positions.

We believe the diversification of this role is critical for philanthropy’s ability to evaluate and learn effectively and in ways that advance equity. We also believe that we have a collective responsibility for ensuring that this diversification extends to positions of power, with tangible control over the direction of the evaluation department and of the foundation itself.

What are foundations doing to get and keep staff of color on their teams and to support them for positions of leadership? How can philanthropic institutions align their diversity, inclusion, and equity efforts with their evaluation and learning investments?

Boards are overall supportive of evaluation, but senior leadership behaviors in support of evaluation and learning fall short.

Over 73% of foundation boards are moderately or highly supportive of foundation spending on evaluation (though we do not know whether this is because they are staying within their relatively small budgets; see pages 14-15). 89% of boards are moderately or highly supportive of foundation staff using evaluation or evaluative data in their decision making.

Having those types of signals is important for encouraging evaluative thinking across a foundation. But real modeling—the board also using evaluative data in their decision making—is a next step that many foundations have yet to successfully take.

Foundation senior management also communicates support for evaluation, but its leadership behaviors around evaluation fall short. For example, a majority of respondents rated senior management engagement with evaluation as poor or fair in supporting adequate investment in the evaluation capacity of grantees (67%) and in modeling the use of information resulting from evaluation work in decision making (57%).

For leadership, then, there can be a disconnect between communicating to staff the value of using evaluation and actually walking the talk themselves. Many foundation evaluation leaders will recognize this as a longstanding challenge. What will it take to influence foundation leadership to truly shift their own evaluative practice?

Our intent is that foundations use these findings to critically assess and reflect on their own practices. The report is meant to help the sector focus its evaluative energy in a way that increases its ability to achieve results.

We look forward to hearing what others find most interesting and how these results can be interpreted from different angles. Share your thoughts with us and with others. If you’re a tweeter, please tweet about the Benchmarking Report using #FndnEvalBench2020.